Who says they do ? They don't. They may have different model matrices, but the view- and perspective matrix are the same. @fleabay recommended to do everything in world coordinates (for now), to avoid having to switch around mentally between the coordinate systems.

I kindly ask to doublecheck the tutorials for example on learnopengl.com, or the latest Red and Blue book on OpenGL.

I hope a make no mistake now, this is the principle:

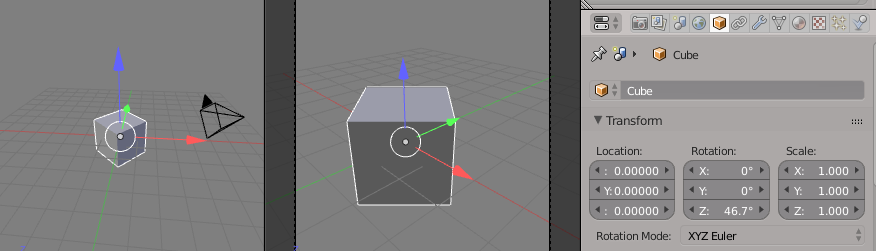

1. place objects in the world by applying rotation and transform to them. This is done with the model matrix for each object differently or grouped or whatever. One can place millions of objects this way. The model-transform puts the objects into world space.

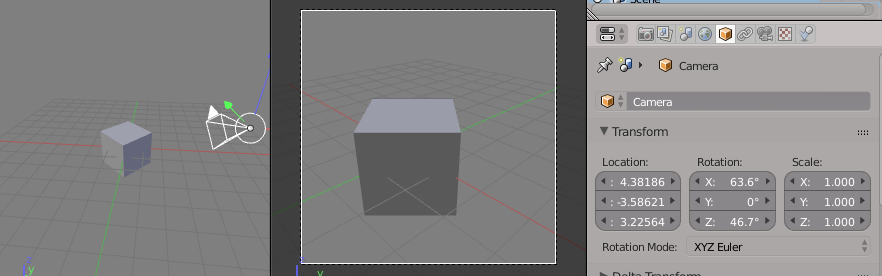

2. calculate (in world coords) a camera position, view direction and up vector. The camera can perform any gymnastics one likes, using trigonometry, inverse matrices, offsets, or simply put into place. After the calculation of the camera position, view and up vectors, calculate the view matrix (glm::lookat()).

3. create the projection matrix from viewport size and near/far plane (glm::perspective()).

4. multiply proj and view and model matrix together to obtain all positions in camera space. The number of objects is banana, they are all just positions in world-space. This step is usually/frequently/partly done in a vertex shader.

You are now pondering over point 2. How to calc the position of the camera.

Hope that was not totally incorrect ?